Machine learning: Predicting suicidal behaviour based on drug abuse and mental health

There is a well-known correlation between certain mental disorders and suicidal ideation or suicidal behaviour.

I was interested in whether a machine learning model could be trained to identify suicidal behaviour based on mental health, biological health, and drug abuse questions.

The dataset I worked with came from the public Criminal Justice Drug Abuse Treatment Studies (CJ-DATS): The Criminal Justice Co-Occurring Disorder Screening Instrument (CJ-CODSI). It covered 353 new admissions to a prison-based substance abuse treatment program (137 Whites, 96 African Americans, and 120 Latinos).

The questionnaire, created by psychologists, was very detailed, with 789 attributes that were either direct data inputs or compound fields derived from other fields.

The variable I wanted to predict was SATTLF (Suicide Attempts Lifetime). To make sure that no suicide-related questions were left in the source data, I removed 164 fields (including SATTLF) that directly or indirectly referred to suicide.

I built two models, a simple decision tree and a random forest, using the scikit-learn Python module.

The models were reasonably easy to build, although the number of estimators had to be fine-tuned for the random forest model. Interestingly, the random forest did not outperform the simple decision tree as much as I had expected.

```python
import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.ensemble import RandomForestClassifier
from sklearn.tree import DecisionTreeClassifier

data = pd.read_csv('27963-0002-Data.tsv', sep='\t')

# Target: True where SATTLF (Suicide Attempts Lifetime) equals 1
y = data['SATTLF'] == 1

# Keep only integer-valued columns as candidate features
feature_names = [i for i in data.columns if data[i].dtype in [np.int64]]

# Suicide-related fields to exclude (the list is abbreviated here with [...])
suicide_features = ['SATTLF', 'mhsf28a', [...] 'oaccodss']
for sf in suicide_features:
    feature_names.remove(sf)

X = data[feature_names]
train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=1, test_size=0.2)

# "sqrt" is what "auto" meant for classifiers; "auto" was removed in scikit-learn 1.3
my_model = RandomForestClassifier(random_state=0, warm_start=True, oob_score=True, n_estimators=3, max_features="sqrt").fit(train_X, train_y)

my_dec_tree_model = DecisionTreeClassifier(random_state=0, max_depth=10, min_samples_split=5, min_samples_leaf=3).fit(train_X, train_y)
```
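One possible way to tune the number of estimators is to grow the forest step by step and watch the out-of-bag (OOB) score. This is a minimal sketch, not necessarily what the notebook does. The data is a synthetic stand-in generated with `make_classification`, and the grid in `candidate_sizes` is a hypothetical choice.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic stand-in for the questionnaire data (assumption: NOT the CJ-DATS file).
X_demo, y_demo = make_classification(
    n_samples=353, n_features=40, n_informative=8, weights=[0.8, 0.2], random_state=1
)

# warm_start=True keeps the trees already grown, so each fit only adds new ones.
forest = RandomForestClassifier(
    warm_start=True, oob_score=True, max_features="sqrt", random_state=0
)

candidate_sizes = [25, 50, 100, 200, 400]  # hypothetical grid of forest sizes
oob_scores = {}
for n in candidate_sizes:
    forest.set_params(n_estimators=n)
    forest.fit(X_demo, y_demo)
    # oob_score_ is the accuracy on samples each tree did not see during training
    oob_scores[n] = forest.oob_score_

best_n = max(oob_scores, key=oob_scores.get)
print(oob_scores, "best:", best_n)
```

The OOB score is an accuracy figure, so with an imbalanced target such as suicide attempts it may be worth checking F1 on a validation set as well before settling on a forest size.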

Overall, the models were reasonably accurate given the difficulty of the problem. The decision tree had an F1 score of 0.6 (precision: 0.562, recall: 0.643), while the random forest had an F1 score of 0.643 (precision: 0.643, recall: 0.643). In other words, both models correctly identified 9 of the 14 people (64.3%) who acted on their suicidal thoughts (total number of people in the validation dataset: 71). It is important to highlight that the models did not just identify suicidal thoughts but actual behaviour, that is, acting on these thoughts, which is more difficult to predict.
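The reported scores can be checked with a few lines of arithmetic. In the sketch below, the true positives (9) and false negatives (5) come from the text. The false positive counts (7 for the decision tree, 5 for the random forest) are my inference from the reported precision values, not figures taken from the notebook.

```python
def precision_recall_f1(tp, fp, fn):
    """Return (precision, recall, F1) from confusion-matrix counts."""
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1

# (TP, FP, FN): TP and FN are from the text (9 of 14 attempters found).
# FP is inferred from the reported precision (assumption).
models = {"decision tree": (9, 7, 5), "random forest": (9, 5, 5)}

results = {}
for name, (tp, fp, fn) in models.items():
    results[name] = precision_recall_f1(tp, fp, fn)
    p, r, f1 = results[name]
    print(f"{name}: precision={p:.3f} recall={r:.3f} F1={f1:.3f}")
```

With these counts, the decision tree flags 16 people to find 9 attempters, while the random forest flags 14, which is the whole difference between the two F1 scores.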

Some attributes in the random forest that correlated with the final score (note: none of these is enough for a prediction on its own; their interaction matters):

psychhosp - Psychiatric hospitalisations (0 / 1). A low value (0) had a moderating effect, while a high value (1) seemed to significantly boost the suicide attempt score.

mhsfto13 - Score of 13 or higher on MHSF (18 different psychopathology questions). A low value (0) had a moderating effect, while a high value (1) seemed to boost the suicide attempt score.

tcuds - Drug use questionnaire, score between 0 and 9. Higher scores seemed to boost the suicide attempt score.

AGE1HER - Age at first heroin use. A low score (missing values) was a protective factor.

pdborder - Personality Disorders: Borderline, score between 0 and 9. Low and middle scores seemed to be neutral, while higher scores seemed to contribute significantly to the overall score.

In a sample SHAP plot for a single subject in the dataset (subject #47), psychiatric hospitalisation, heroin use at the age of 18, and 5 out of 9 borderline behaviours contributed significantly to the overall score. On the other hand, not using crack in the last 6 months, finishing 12th grade, and scoring only 3 on the TCUDS drug questionnaire were protective factors, yielding an overall score of 0.33.

There were two interesting attributes that seemed to have a cutoff value: the highest grade finished and the borderline behaviour score. It appears that finishing 11th grade or higher, or having a score of 4 or lower on the borderline behaviour scale, is a protective factor in terms of suicidal behaviour.

What is salient, and difficult to explain, is that according to the Diagnostic and Statistical Manual of Mental Disorders (DSM-5), a person meets the diagnostic criteria for borderline personality disorder if their behaviour matches 5 or more of the 9 behavioural patterns that are typical of this disorder. It is interesting that the model built on this data showed a significant increase exactly where the DSM-5 draws the line, even though there is nothing special about that value. One explanation could be that clinicians intentionally rounded values up to 5 if they felt that the patient met the overall diagnosis; however, there are plenty of inmates with a value of 4, so this might not hold. This finding warrants further research.
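A cutoff like this can also be looked for without SHAP, by forcing one feature to each possible value and averaging the model's predicted probability (a hand-rolled partial dependence curve, which is a different technique from the SHAP dependence plots used here). The sketch below uses synthetic data with a step deliberately built in at a score of 5, so every name and number in it is an assumption for illustration, not a result from the CJ-DATS data.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier

rng = np.random.default_rng(0)
n = 2000

# Synthetic data (assumption): a 0-9 score with a built-in step at 5, plus a noise column.
score = rng.integers(0, 10, size=n)
noise = rng.normal(size=n)
y = rng.random(n) < np.where(score >= 5, 0.6, 0.1)
X = np.column_stack([score, noise])

model = RandomForestClassifier(
    n_estimators=100, min_samples_leaf=20, random_state=0
).fit(X, y)

def partial_dependence_curve(model, X, column, grid):
    """Average predicted probability when `column` is forced to each grid value."""
    curve = []
    for value in grid:
        X_forced = X.copy()
        X_forced[:, column] = value
        curve.append(model.predict_proba(X_forced)[:, 1].mean())
    return np.array(curve)

grid = np.arange(10)
curve = partial_dependence_curve(model, X, column=0, grid=grid)

# The cutoff is taken as the first grid value after the largest upward jump.
jumps = np.diff(curve)
cutoff = grid[np.argmax(jumps) + 1]
print(np.round(curve, 2), "largest jump at score", cutoff)
```

On real data the curve is rarely this clean, so a jump found this way is a hint to investigate rather than evidence of a true threshold.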

The full source code is available as a Python notebook on GitHub.

If you have suicidal thoughts, call a local support centre in your country. Personal Psychology psychologists in North Sydney also offer therapy, if you are local.

You can find phone numbers here or here.

I was interested in whether a machine learning model could be trained to identify suicidal behaviour based on mental health, biological health, and drug abuse questions.

The dataset I was working with came from the public Criminal Justice Drug Abuse Treatment Studies (CJ-DATS): The Criminal Justice Co-Occurring Disorder Screening Instrument (CJ-CODSI) and had new 353 new admissions to a prison-based substance abuse treatment program (137 Whites, 96 African Americans, and 120 Latinos).

The questionnaire, created by psychologists, overall was very detailed with 789 attributes, either direct data input or compound fields, based on other fields.

The variable I wanted to forecast was called SATTLF (Suicide Attempts Lifetime) and to make sure that no suicide or suicide-related questions were left in the source data, I removed 164 fields (including SATTLF), which directly or indirectly referred to suicide.

I built two models, a simple decision tree and a random forest scikit-learn Python module.

Building the models

The models were reasonably easy to build, although the optimal number of estimators had to be fine-tuned for the random forest model. Interestingly, the random forest did not outperform the simple decision tree as much as I thought it would have.

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.ensemble import RandomForestClassifier

from sklearn.tree import DecisionTreeClassifier

data = pd.read_csv('27963-0002-Data.tsv', sep='\t')

y = data['SATTLF'] == 1

feature_names = [i for i in data.columns if data[i].dtype in [np.int64]]

suicide_features = ['SATTLF', 'mhsf28a', [...] 'oaccodss']

for sf in suicide_features:

feature_names.remove(sf)

X = data[feature_names]

train_X, val_X, train_y, val_y = train_test_split(X, y, random_state=1, test_size=0.2)

my_model = RandomForestClassifier(random_state=0, warm_start=True, oob_score=True, n_estimators=3, max_features="auto").fit(train_X, train_y)

my_dec_tree_model = DecisionTreeClassifier(random_state=0, max_depth=10, min_samples_split=5, min_samples_leaf=3).fit(train_X, train_y)

Results

Overall, the models were reasonably accurate, compared to the difficulty of the problems. The decision tree had an F1 score of 0.6 (precision: 0.562, recall: 0.643), while the random forest had an F1 score of 0.643 (precision: 0.643, recall: 0.643). In other words, both models correctly identified 9 people out of 14 (64.3%) who acted on their suicidal thoughts (total number of people in the validation dataset: 71). It is important to highlight, that the model did not just identify suicidal thoughts, but actual behaviour, as in, acting on these thoughts, which is more difficult to predict.

|

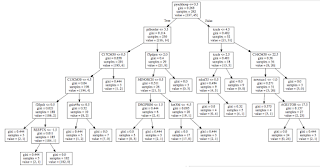

| Decision tree from my_dec_tree_model |

Some attributes in the random forest that correlated to the final score (note: none of these in themselves are enough for a prediction, their interaction matters)

|

| SHAP summary for my_model (random forest) |

psychhosp - Psychiatric hospitalisations (0 / 1). A low value (0) was a moderator, while high value (1) seemed to significantly boost the suicide attempt score.

mhsfto13 - Score of 13 or higher on MHSF (18 different psychopathology questions). A low value (0) was a moderator, while high value (1) seemed to boost the suicide attempt score.

tcuds - Drug use questionnaire, score between 0-9. Higher scores seemed to boost the suicide attempt score.

AGE1HER - Age 1st Heroin Use, low score (missing values) is a protective factor.

pdborder - Personality Disorders- Borderline, score between 0-9. Low and middle scores seemed to be neutral, higher scores seemed to significantly contribute to the overall score.

A sample SHAP plot for a subject in the dataset:

|

| Single SHAP plot for subject #47 |

As apparent, physiatric hospitalisation, heroin use at age of 18, and 5 out of 9 borderline behaviours significantly contributed to the overall score. On the other hand, not using crack in the last 6 months, finishing 12 grade, and scoring only 3 on the TCUDS drug questionnaire were protective factors, yielding an overall score of 0.33.

Dependence plots

There were two interesting attributes that seemed to have a cutoff value: the highest grade finished and borderline behaviour score. It appears that finishing 11th grade or higher, or having a score of 4 or lower on the borderline behaviour scale is a protective factor in terms of suicide behaviour.

What is salient, and difficult to explain, is that according to the Diagnostic and Statistical Manual of Mental Disorders (DSM–5), a person meets the diagnostic criteria for borderline personality disorder if their behaviour matches 5 or more out of the 9 behavioural patterns that are typical with this disorder. It is interesting, that the model built on the data in our case had that significant increase exactly where the DSM-5 draws the line, even thought there is nothing specific about that value. One explanation could be, that clinicians intentionally rounded up values to 5 if they felt that the patient met the overall diagnosis; however, there are ample of inmates with value of 4, so this might not hold. This finding warrants further research.

Code

The full source code is available as a Python notebook on Github.

Reach out

You can find phone numbers here or here.

Comments

Post a Comment